How Counterfeiters Build Social Proof with Fake Reviews, Influencers & Verified Badges

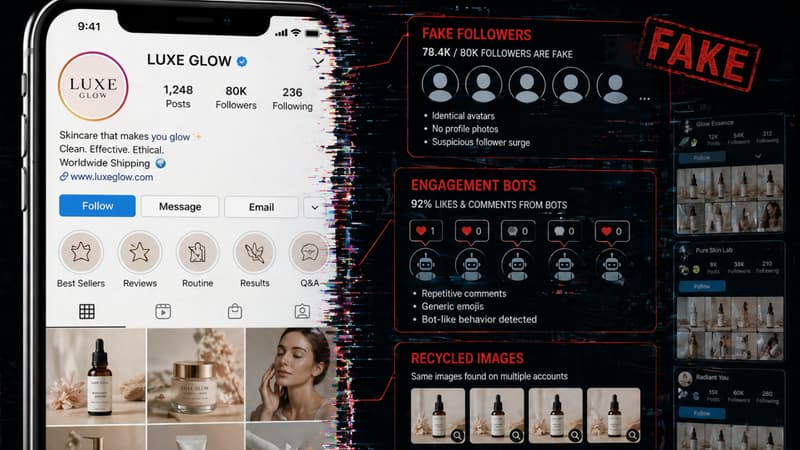

A consumer on Instagram finds a skincare brand. The account has 80,000 followers, a blue verification badge, and two recent unboxing videos from micro-influencers raving about the product. Its marketplace listing has 4.9 stars, 340 Verified Purchase reviews, and photos of real people using it. Everything looks legitimate. The product is entirely fake.

This is not an edge case. It is the standard operating procedure for modern counterfeit operations.

Today, counterfeiters are not just replicating products. They are replicating trust. The fake packaging, label, and product are the back end. The front end is a carefully constructed social proof machine, built to make consumers feel safe before they ever reach the product page. In most cases, it works.

Here is exactly how they build it.

Social Proof Is Now Part of the Counterfeit Product Itself

Consider how people typically buy a product online. They glance at the star rating, scroll through a few reviews, and maybe check whether the brand has a social media presence. If an influencer has used the product, that settles the question.

Counterfeiters know this process better than most marketing teams do.

The modern consumer rarely questions a product that has a 4.8-star rating, 200+ reviews, a verified seller badge, and an influencer post behind it. So counterfeit operations have stopped competing on product quality and started competing on trust signals. The fake review, the fake influencer, and the fake badge are not afterthoughts. They are the core infrastructure.

The playbook has three pillars. Each one is designed to collapse a different layer of consumer scepticism.

How Fake Review Ecosystems Actually Work

The most effective tactic in the counterfeiters' toolkit right now is the brushing scam.

Here is how it works. A counterfeiter sets up a listing for a fake product on a marketplace. Rather than posting reviews from obvious bot accounts, they need Verified Purchase status: the badge that tells both the algorithm and the consumer that the reviewer actually bought the product.

To get it, they obtain consumer data, bought on the dark web or harvested from data breaches. They create buyer accounts using real names and real addresses, then "buy" their own product through those accounts. Next, they ship a cheap, lightweight filler item, such as a rubber band or an empty envelope, which generates a real courier tracking number and a delivered status for the order. With a completed delivery in the system, they can post a detailed, photo-rich, AI-generated five-star review that carries the Verified Purchase badge.

To the platform's algorithm, this looks like a genuine sale. To the consumer, it looks like genuine feedback.

Amazon removed over 275 million suspected fake reviews in 2024 alone. Brushing scam reports rose 46% in 2025 compared to the previous year. These numbers reflect an operation that has industrialised itself.

Beyond brushing, there are review farms: invitation-only groups on Telegram, WhatsApp, and Discord where real buyer accounts are paid or otherwise rewarded for posting manipulated reviews. Because real users post these reviews from real accounts, they are significantly harder to flag. Increasingly, AI tools also generate the review text itself: contextually specific, human-sounding, keyword-optimised, and produced at a scale no human team could match.

The SEO angle matters here too. Fake reviews are not just written to persuade consumers. They are written to rank. Counterfeit listings stuffed with the right product keywords outrank genuine brand listings in marketplace search results, intercepting buyers before they ever find the real product.

The Micro-Influencer Problem: Unwitting Accomplices

Counterfeiters know that bot traffic and fake badges can only go so far. They need human faces.

Micro-influencers, creators with 5,000 to 50,000 followers, are the target. The reason is straightforward. Their audiences are tight-knit and treat them like friends, not advertisers. An endorsement from a micro-influencer converts better than a celebrity post because it feels personal.

Counterfeiters approach these influencers with a simple offer: a free product in exchange for an unboxing video or a short review post. The influencer agrees. What arrives is a "superfake", a high-quality replica packed in cloned official packaging. The influencer has no idea the product is not authentic and genuinely believes they are reviewing the real thing. Their enthusiasm is real, their credibility is real, and the damage to the legitimate brand is real.

A 2019 study by analytics firm Ghost Data found that over 15% of posts with branded and commercial hashtags led to counterfeit products. A BBC investigation showed three prominent influencers agreeing to promote a fictional drink that contained cyanide as a listed ingredient, without checking a single detail about the product. One influencer's agent openly admitted she does not try half the things she promotes.

Amazon's 2020 lawsuit against 13 bad actors uncovered a sharper scheme. Influencers ran "Order This / Get This" ads on Instagram and TikTok. The "Order This" link led to a generic product on Amazon, while the "Get This" visual showed a counterfeit luxury item. Consumers who clicked and purchased received the fake. Because the counterfeit brand name never appeared in the listing, the seller could evade detection algorithms.

Not all of this is unwitting. A growing class of "dupe influencers" knowingly promotes counterfeits by reframing them as "affordable alternatives" or "reps". The language is deliberately designed to sidestep legal accountability while normalising fake purchases. Dupe culture has become socially accepted, especially among younger consumers, and that normalisation is a direct problem for brands trying to communicate authenticity.

Cloned Brand Accounts and the Fake Verified Badge

Before a consumer reaches a product listing, they often pass through social media. This is where the third pillar of the counterfeit trust machine operates.

Counterfeiters build mirror accounts (profiles that look exactly like a brand's official presence). They scrape the official brand's ad creatives, product photography, and video content. They buy bulk followers to simulate an established community. They exploit platform loopholes or purchase aged accounts that already have verification badges attached. Some go further and run paid social ads using stolen brand imagery, directing traffic to a fraudulent Shopify store.

By the time a consumer clicks the link in the bio, every trust signal has already fired: the badge, the follower count, the polished content. Their guard is down before the product page even loads.

The same principle applies on marketplaces. "Verified Seller" and "Top Rated" badges on Amazon, eBay, and TikTok Shop can be gamed. A seller behaves well, accumulates legitimate reviews early, earns the badge, and then pivots to selling counterfeits under that trusted status. These hybrid sellers keep genuine products in the catalogue to maintain their rating while moving fake inventory alongside them.

Standalone fake websites follow the same pattern. Infringements involving fake brand websites jumped 59% between 2022 and 2024, with a further 70% increase projected by the end of 2025. In early 2025, counterfeit handbags and sneakers were circulating on Instagram and TikTok within 48 hours of major product launches. These mirror operations now spin up faster than brands can respond manually.

The Signals That Separate Fake Engagement From Real

Brand protection teams need to shift their focus. Looking only for fake logos misses most of what is actually happening. Here is what to watch for.

1. Review velocity spikes: A new listing that collects 50 five-star reviews within 48 hours of going live is almost certainly not organic; that volume is statistically implausible for a new product. This velocity pattern is the clearest early signal of a brushing or review farm operation.

2. Linguistic echoes: Multiple reviews that use identical phrasing, or hit the exact same keyword combinations, usually point to AI-generated text produced from a shared prompt or template. Real consumers write differently from one another.

3. Recycled media: The same user-generated photo appears across multiple listings, seller accounts, or platforms. Real buyers do not coordinate their product photography.

4. Follower-to-engagement mismatch: A brand account with 80,000 followers averaging 30 comments per post (under 0.04% of its audience per post) likely has a purchased audience. Genuine communities engage at a different ratio.

5. Sudden badge acquisition: A seller with minimal history appearing with a Verified or Top Rated badge is worth examining. Hybrid sellers often earn their badge fast by selling genuine products before pivoting.

6. Geographic clustering: Reviews that all originate from accounts registered in the same region within a short time window suggest coordinated activity, not organic spread.
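
Several of these signals can be turned into simple automated checks. The Python sketch below is a minimal illustration, assuming a hypothetical Review record with a reviewer ID, star rating, text, posting time, reviewer region, and account creation time; real marketplace data will look different. The thresholds (50 reviews in 48 hours, a Jaccard similarity of 0.5 on three-word phrases, 10 accounts created per region within a week) are illustrative assumptions, not industry benchmarks.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta
from itertools import combinations


@dataclass
class Review:
    # Hypothetical review record; field names are illustrative, not any platform's API.
    reviewer_id: str
    stars: int
    text: str
    posted_at: datetime
    reviewer_region: str
    account_created_at: datetime


def velocity_flag(reviews, listed_at, window=timedelta(hours=48), threshold=50):
    """Signal 1: too many five-star reviews within `window` of the listing going live."""
    early = [r for r in reviews
             if r.stars == 5 and listed_at <= r.posted_at <= listed_at + window]
    return len(early) >= threshold


def shingles(text, n=3):
    """Overlapping word n-grams, used to compare phrasing between reviews."""
    words = text.lower().split()
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def echo_pairs(reviews, min_similarity=0.5):
    """Signal 2: reviewer pairs whose texts share most of their three-word phrases (Jaccard)."""
    sigs = [(r.reviewer_id, shingles(r.text)) for r in reviews]
    pairs = []
    for (id_a, a), (id_b, b) in combinations(sigs, 2):
        union = a | b
        if union and len(a & b) / len(union) >= min_similarity:
            pairs.append((id_a, id_b))
    return pairs


def engagement_rate(avg_comments_per_post, followers):
    """Signal 4: average comments per post as a fraction of the follower count."""
    return avg_comments_per_post / followers


def geo_clusters(reviews, window=timedelta(days=7), min_size=10):
    """Signal 6: regions where `min_size` reviewer accounts were created within `window`."""
    created = defaultdict(list)
    for r in reviews:
        created[r.reviewer_region].append(r.account_created_at)
    flagged = []
    for region, dates in created.items():
        dates.sort()
        start = 0
        for end in range(len(dates)):  # sliding window over sorted creation dates
            while dates[end] - dates[start] > window:
                start += 1
            if end - start + 1 >= min_size:
                flagged.append(region)
                break
    return flagged


# Synthetic example: 60 identical five-star reviews from freshly created accounts in one region.
listed = datetime(2025, 1, 1)
batch = [Review(f"u{i}", 5, "amazing serum my skin glows after one week highly recommend",
                listed + timedelta(hours=i % 40), "Region-A", listed - timedelta(days=2))
         for i in range(60)]
print(velocity_flag(batch, listed))          # True
print(len(echo_pairs(batch[:3])))            # 3
print(geo_clusters(batch))                   # ['Region-A']
print(f"{engagement_rate(30, 80_000):.4%}")  # 0.0375%
```

Each check returns a flag, not a verdict. A listing that trips several signals at once is a much stronger lead than one that trips a single check. At marketplace scale, comparing every pair of reviews becomes expensive, so near-duplicate detection would typically use an approximate technique such as MinHash instead of the exhaustive pairwise loop shown here.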

Why Platforms Struggle to Act on Review Fraud

Listing fraud is relatively binary. A fake logo is a clear IP violation. A takedown request has a path to resolution.

Review fraud is behavioural. Proving that a Verified Purchase review is part of a brushing operation requires connecting data points across multiple systems. The platform has to correlate the buyer account, the shipping address, the delivery confirmation, the review content, and the seller's broader network. At the scale these operations run, platforms are structurally overwhelmed.
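
To see why this correlation is hard, consider what even one brushing check involves. The Python sketch below joins three hypothetical record types (orders, courier shipments, and reviews) and flags Verified Purchase reviews where the tracked parcel weighs far less than the listed product, as a filler item would, and the buyer account has no other orders. The record fields, the 20% weight ratio, and the one-order threshold are assumptions for illustration; platforms hold this data in separate systems and may not record parcel weights at all.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Order:
    order_id: str
    buyer_id: str
    seller_id: str
    listed_weight_g: int   # weight declared on the product listing (hypothetical field)


@dataclass
class Shipment:
    order_id: str
    tracked_weight_g: int  # weight recorded by the courier (hypothetical field)
    delivered: bool


@dataclass
class Review:
    order_id: str
    buyer_id: str
    verified_purchase: bool


def brushing_suspects(orders, shipments, reviews, buyer_order_counts, weight_ratio=0.2):
    """Return order IDs whose Verified Purchase review rests on a suspiciously light,
    delivered parcel sent to a buyer account with no other purchase history."""
    order_by_id = {o.order_id: o for o in orders}
    shipment_by_id = {s.order_id: s for s in shipments}
    suspects = []
    for r in reviews:
        order = order_by_id.get(r.order_id)
        ship = shipment_by_id.get(r.order_id)
        if not (r.verified_purchase and order and ship and ship.delivered):
            continue
        # A filler item (a rubber band, an empty envelope) weighs far less than the product.
        too_light = ship.tracked_weight_g < weight_ratio * order.listed_weight_g
        # Accounts built from harvested identities rarely have any other orders.
        one_off_buyer = buyer_order_counts.get(r.buyer_id, 0) <= 1
        if too_light and one_off_buyer:
            suspects.append(r.order_id)
    return suspects


def suspects_per_seller(orders, suspect_ids):
    """Aggregate suspect orders by seller to surface the seller's wider network."""
    seller_of = {o.order_id: o.seller_id for o in orders}
    return Counter(seller_of[oid] for oid in suspect_ids)


orders = [Order("o1", "b1", "s1", 250), Order("o2", "b2", "s1", 250), Order("o3", "b3", "s2", 250)]
shipments = [Shipment("o1", 15, True), Shipment("o2", 12, True), Shipment("o3", 240, True)]
reviews = [Review("o1", "b1", True), Review("o2", "b2", True), Review("o3", "b3", True)]
counts = {"b1": 1, "b2": 1, "b3": 9}

ids = brushing_suspects(orders, shipments, reviews, counts)
print(ids)                               # ['o1', 'o2']
print(suspects_per_seller(orders, ids))  # Counter({'s1': 2})
```

Even this toy version needs three data sources joined on order ID, plus each buyer's purchase history, before it can say anything about a single review. Running that correlation across millions of listings, against sellers who spread activity over many accounts, is the structural problem platforms face.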

The FTC's final rule on fake reviews and testimonials, which took effect in October 2024, was a meaningful regulatory step. It prohibits fake reviews, including AI-generated reviews attributed to people who do not exist, bans buying or selling fake social media indicators such as bot-generated followers and views, and restricts undisclosed reviews by company insiders, with civil penalties for knowing violations. But even strong regulation does not solve the detection problem. Enforcement is reactive: the violation still has to be identified first.

Amazon blocked hundreds of millions of suspected fake reviews in 2025. That number shows both that the system is trying and that the problem is enormous. Most fake listings accumulate hundreds of sales before removal, and the burden of detection typically falls back on the brand.

Waiting for platforms to act is not a strategy.


How Brands Can Fight Back

The approach has to be two-sided.

The first side is monitoring the fake front end. Manual searches do not scale against automated review farms and mirror account networks. Brands need AI-driven monitoring running simultaneously across marketplaces, social platforms, rogue domains, and review clusters. The goal is to identify not just the counterfeit listing itself but the social proof infrastructure around it, such as fake accounts, review anomalies, and cloned profiles.

The second side is making genuine social proof structurally harder to replicate. This is where most brands fall short. They protect the product but leave the review ecosystem wide open.

The fix is to tie the review process to the physical product. When a consumer must scan an authenticated product label to leave a verified review on a brand's direct channel, counterfeiters are locked out, because they cannot generate that scan from a fake product. Any review that comes through is, by definition, from someone holding the real thing.
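
A minimal sketch of scan-gated reviews in Python follows. It assumes a hypothetical scheme in which each genuine label carries a serial number plus an HMAC tag computed with a key only the brand holds, and the brand's review endpoint accepts a review only when the tag verifies and the serial has not been used before. It illustrates the general idea only; it is not how Acviss Certify works, since this article does not describe Certify's internals. A printed HMAC code on its own can be photographed and copied from one genuine label, which is why the sketch also limits each serial to a single review; stopping physical copying requires a label that is itself non-cloneable.

```python
import hashlib
import hmac
import secrets

# Hypothetical brand-held signing key; in practice it would live in a key-management system.
SECRET_KEY = secrets.token_bytes(32)


def label_tag(serial: str) -> str:
    """Tag printed (for example, inside a QR code) on the genuine label for `serial`."""
    return hmac.new(SECRET_KEY, serial.encode(), hashlib.sha256).hexdigest()


class ReviewGate:
    """Accepts at most one review per genuine, brand-issued label (hypothetical design)."""

    def __init__(self):
        self.used_serials = set()
        self.reviews = []

    def submit(self, serial: str, tag: str, text: str) -> bool:
        # Reject tags the brand never issued, using a constant-time comparison.
        if not hmac.compare_digest(label_tag(serial), tag):
            return False
        # Reject a second review from the same physical label.
        if serial in self.used_serials:
            return False
        self.used_serials.add(serial)
        self.reviews.append((serial, text))
        return True


gate = ReviewGate()
genuine_tag = label_tag("SN-0001")
print(gate.submit("SN-0001", genuine_tag, "Genuine, works as described"))  # True
print(gate.submit("SN-0001", genuine_tag, "Reusing the same label"))       # False
print(gate.submit("SN-9999", "0" * 64, "Tag from a counterfeit label"))    # False
```

The design choice here is that the review system trusts the label, not the buyer account, so stolen identities and brushed orders give a counterfeiter nothing to scan.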

This structural separation of authentic feedback from manufactured social proof is the only durable solution. Everything else is chasing the fraud after it has already happened.

How Acviss Helps

AI and ML-powered monitoring across global marketplaces, social media platforms, and rogue domains, running continuously

Detects fake listings, cloned brand accounts, anomalous review patterns, and fraudulent seller networks

Initiates automated takedowns to remove the social proof pipeline before it reaches consumers

Non-cloneable QR codes on the physical product

When review access is tied to a Certify scan, only verified, genuine buyers can contribute to the authenticated review record

Permanently separates real consumer feedback from manufactured fake social proof

Value Outcomes

Fewer fake listings and mirror accounts are active at any time

An authentic review ecosystem counterfeiters cannot infiltrate

Faster takedowns, stronger brand trust, cleaner consumer data

Frequently Asked Questions

What is a brushing scam, and how does it relate to counterfeit products?

A brushing scam is when a seller uses stolen consumer data to place fake orders for their own products, then ships cheap filler items to generate a real delivery confirmation. This gives them Verified Purchase status and allows them to post fake five-star reviews, which counterfeit sellers use to build false credibility on marketplaces.

How do counterfeiters use micro-influencers to promote fake products?

Counterfeiters send high-quality replicas with cloned packaging to micro-influencers, who typically believe they are reviewing authentic products. The influencer's genuine enthusiasm transfers credibility to the fake listing, and their followers often make purchases based on that endorsement.

What is a fake verified badge and how do counterfeit sellers get one?

On marketplaces, counterfeit sellers obtain trusted badges by gaming the system: they build legitimate ratings early through compliant behaviour, earn the badge, and then pivot to selling counterfeits under that trusted status. On social media, mirror accounts acquire badges by exploiting platform loopholes or purchasing aged accounts that already carry verification.

What are the warning signs of fake reviews on a product listing?

Key signals include a sudden spike of five-star reviews within 48 hours of a listing going live, multiple reviews using identical phrasing, recycled product photos appearing across different listings, and geographic clustering of reviewer accounts.

Why can't marketplaces simply remove fake reviews faster?

Proving review fraud requires behavioural analysis across multiple data points: buyer accounts, shipping records, review content, and seller networks. At the volume these operations run, platforms are overwhelmed. Most counterfeit listings accumulate significant sales before removal, and the burden of detection typically falls on the brand.

How can brands protect their review ecosystem from counterfeiters?

The most effective approach combines AI-driven online monitoring to detect and remove fake social proof infrastructure, with product-level authentication that ties review access to a physical scan of an authenticated label. This makes it structurally impossible for counterfeiters to participate in a brand's genuine feedback loop.